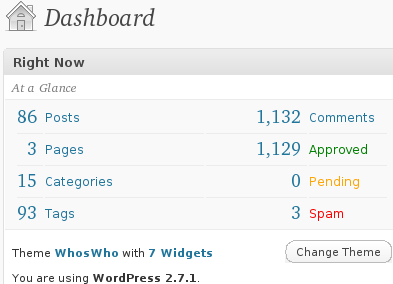

Regular visitors to dsplog.com might have noticed that the small FeedBurner chicklet on the side now shows 1000+ subscribers. It's a nice milestone to reach, one that looked so distant when I wrote the first post stating the objective of this blog on 26th February 2007. We now have around 86 articles with 1100+ comments.

Brief History

- First post (Objective) : February 26th 2007

- Migration from Blogger to self-hosted domain @ dsplog.com : October 2007

- Migration to Deep Blue theme : May 4th 2008

- Availability of free e-Book on Error Rates in AWGN : October 1st 2008

- Migration to Who’s Who theme : January 13th 2009

Subscribe

The rate of new subscribers improved dramatically once I introduced the free e-Book in October of 2008.

Joining the email subscription list entitles you to receive the free e-Book on Probability of Error for BPSK/QPSK/16QAM/16PSK/64QAM in AWGN.

Subscribe and download the free e-Book

Alternatively, you may subscribe to the RSS feed by clicking here.

Future

While writing the posts, getting the simulations to work and responding to all those comments, I learned a lot over the last two years. Signal processing for wireless communication is a vast area and we have only just started. I hope to continue to discuss topics relevant to signal processing for communication.

I am hoping that reaching the next 1000 subscribers won't take another two years, and to achieve that I need your help. If each of you can tell at least five of your friends/colleagues about this blog, it will go a long way in spreading the word. Thanks in advance.

Other thoughts

Over the past 6 to 8 weeks, I know that I am guilty of not keeping up with the one post per week target which I have set myself, and of not responding to comments within 2-3 days. I have not been able to squeeze out sufficient time for blogging after office work and parenting. I hope to come back to the one post per week cycle from the second week of April 2009.