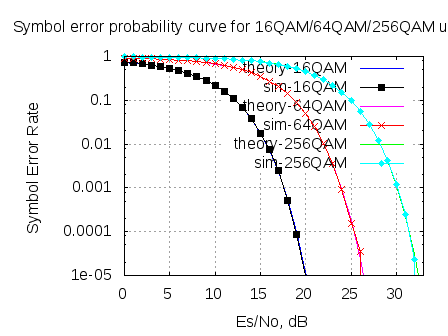

In May 2008, we derived the theoretical symbol error rate for a general M-QAM modulation (in Embedded.com, DSPDesignLine.com and dsplog.com) under Additive White Gaussian Noise (AWGN). On re-reading that post, we felt the article warrants a re-run, this time using OFDM as the underlying physical layer. This post discusses the derivation of the symbol error rate for a general M-QAM modulation. The companion Matlab script compares the theoretical and simulated symbol error rates for 16QAM, 64QAM and 256QAM over OFDM in an AWGN channel.
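To give a feel for the numbers, here is a minimal Python sketch of the standard closed-form symbol error rate for square M-QAM in AWGN. It is not the companion Matlab script. The function name `qam_ser_awgn` is made up for this illustration, and the sketch assumes that the Es/N0 passed in is the per-symbol value seen on each subcarrier, with any OFDM overhead already accounted for.

```python
import math


def qam_ser_awgn(M, es_n0_db):
    """Theoretical symbol error rate of square M-QAM in AWGN.

    M        : constellation size, assumed to be a perfect square (16, 64, 256, ...)
    es_n0_db : symbol energy to noise density ratio Es/N0 in dB (assumed per-symbol value)
    """
    root_m = math.isqrt(M)
    if M < 4 or root_m * root_m != M:
        raise ValueError("M must be a square constellation size such as 16, 64, 256")

    es_n0 = 10.0 ** (es_n0_db / 10.0)          # dB -> linear
    k = math.sqrt(3.0 / (2.0 * (M - 1)))       # energy normalisation factor

    # Error probability along one axis (a sqrt(M)-level PAM)
    p_axis = (1.0 - 1.0 / root_m) * math.erfc(k * math.sqrt(es_n0))

    # A symbol is correct only if both the I and Q decisions are correct
    return 1.0 - (1.0 - p_axis) ** 2


if __name__ == "__main__":
    for M in (16, 64, 256):
        print(M, qam_ser_awgn(M, 20.0))
```

At a fixed Es/N0 the error rate grows with M, because the constellation points sit closer together once the average symbol energy is normalised.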

Enjoy and HAPPY NEW YEAR 2012 !!!
