Most real-world datasets exhibit class imbalance, where a “majority” class dwarfs the “minority” class. Typical examples include identifying rare pathologies in medical diagnosis, flagging anomalous transactions for fraud detection, and detecting sparse foreground objects against a vast background in computer vision.

The machine learning models we have discussed so far, binary classification (see the post Gradients for Binary Classification with Sigmoid) and multiclass classification (see the post Gradients for multi class classification with Softmax), need tweaks to learn from these imbalanced datasets. Without these adjustments, a model can “cheat” by favouring the majority class and report a deceptively high overall accuracy even though its accuracy on the minority class is low.
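
To make the “cheating” concrete, here is a minimal sketch on made-up labels (990 majority samples and 10 minority samples, purely hypothetical data). The helper names `accuracy` and `per_class_recall` are illustrative and not taken from any library. A model that always predicts the majority class scores well on overall accuracy while never finding a single minority sample.

```python
def accuracy(y_true, y_pred):
    """Fraction of all samples that are predicted correctly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    """Accuracy computed separately on the samples of each true class."""
    recalls = {}
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        recalls[c] = sum(y_pred[i] == c for i in idx) / len(idx)
    return recalls


# Hypothetical imbalanced dataset: 990 samples of class 0, 10 of class 1.
y_true = [0] * 990 + [1] * 10
# A degenerate "model" that always predicts the majority class.
y_pred = [0] * 1000

overall = accuracy(y_true, y_pred)
recalls = per_class_recall(y_true, y_pred)
# Balanced accuracy: the plain average of the per-class recalls.
balanced = sum(recalls.values()) / len(recalls)

print(f"overall accuracy : {overall:.2f}")
print(f"per-class recall : {recalls}")
print(f"balanced accuracy: {balanced:.2f}")
```

The overall accuracy looks excellent only because the majority class dominates the average; the per-class view shows that the minority class is never detected.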

Several strategies have emerged over the years. This article covers the approaches listed below.

- Weighted cross entropy

  - Foundational baseline, where a class-specific weight factor is applied to the standard cross-entropy loss so that each sample's loss is weighted according to the frequency of its class (typically, rarer classes get larger weights).

- Focal Loss for Dense Object Detection, Lin et al. (2017)

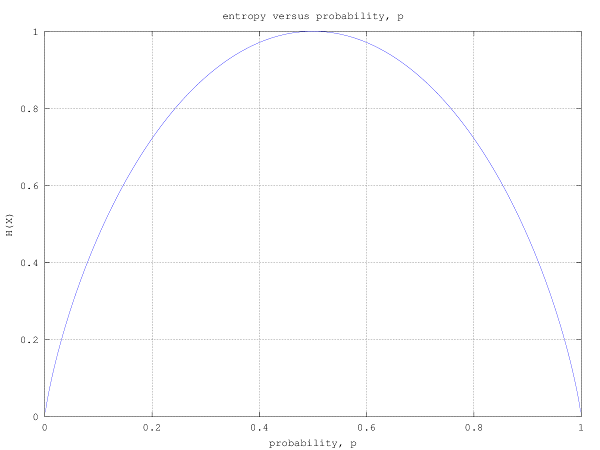

  - Proposes a modulating factor $(1 - p_t)^\gamma$ on the cross-entropy loss that down-weights easy/frequent examples, which indirectly forces the model to focus on hard/rare examples.

- Asymmetric Loss for Multi-Label Classification, Ridnik et al. (2021)

  - Extends the intuition of Focal Loss by using independent focusing hyper-parameters ($\gamma_+$ and $\gamma_-$) for positive and negative samples. This allows more aggressive down-weighting of easy/frequent examples while preserving the gradient signal for hard/rare samples.

  - Additionally, the authors introduce a probability margin that explicitly zeros out the loss from very easy negative samples.

- Class-Balanced Loss Based on Effective Number of Samples, Cui et al. (CVPR 2019)

  - Based on the intuition that samples within a class are similar to one another, the authors propose a framework that captures the diminishing benefit of adding more data samples to a class.

- Long-tail Learning via Logit Adjustment, Menon et al. (ICLR 2021)

  - Building on Bayes' rule, the authors propose adding a class-dependent offset, derived from the class prior probabilities, to the logits. This helps the model minimise the balanced error rate (the average of the per-class error rates) instead of the global error rate.
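
As a rough preview of the approaches listed above, the following per-sample sketches use only the Python standard library. They are simplified illustrations under stated assumptions, not the reference implementations from the papers: all function names are hypothetical, and the default values (`gamma=2.0`, `gamma_neg=4.0`, `margin=0.05`, `beta=0.999`, `tau=1.0`) are illustrative choices.

```python
import math

EPS = 1e-12  # guards against log(0)


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_cross_entropy(probs, target, class_weights):
    """Cross-entropy scaled by a per-class weight (e.g. inverse frequency)."""
    return -class_weights[target] * math.log(max(probs[target], EPS))


def focal_loss(probs, target, gamma=2.0):
    """Cross-entropy multiplied by the modulating factor (1 - p_t)^gamma."""
    p_t = max(probs[target], EPS)
    return -((1.0 - p_t) ** gamma) * math.log(p_t)


def asymmetric_loss(p, label, gamma_pos=0.0, gamma_neg=4.0, margin=0.05):
    """Binary (per-label) loss with separate focusing for positives and negatives."""
    if label == 1:
        return -((1.0 - p) ** gamma_pos) * math.log(max(p, EPS))
    # Probability margin: negatives with p <= margin contribute zero loss.
    p_m = max(p - margin, 0.0)
    return -(p_m ** gamma_neg) * math.log(max(1.0 - p_m, EPS))


def class_balanced_weights(counts, beta=0.999):
    """Weights proportional to 1 / E_n, with E_n = (1 - beta^n) / (1 - beta)."""
    return [(1.0 - beta) / (1.0 - beta ** n) for n in counts]


def logit_adjusted_cross_entropy(logits, target, priors, tau=1.0):
    """Cross-entropy on logits shifted by tau * log(class prior)."""
    adjusted = [z + tau * math.log(p) for z, p in zip(logits, priors)]
    return -math.log(max(softmax(adjusted)[target], EPS))


# Usage on made-up numbers: an easy example (p_t = 0.9) and a hard one (p_t = 0.1).
probs = [0.1, 0.9]
print("CE easy   :", -math.log(probs[1]))
print("focal easy:", focal_loss(probs, target=1))
print("focal hard:", focal_loss(probs, target=0))
print("CB weights:", class_balanced_weights([1000, 10]))
print("ASL easy negative:", asymmetric_loss(0.03, label=0))
print("logit-adjusted, rare class:",
      logit_adjusted_cross_entropy([0.0, 0.0], 1, [0.99, 0.01]))
```

In this toy run the focal loss shrinks the easy example's contribution far more than the hard one's, the class-balanced weights favour the class with fewer samples, the asymmetric loss discards the negative that falls under the margin, and the logit adjustment increases the loss on the rare class when the raw logits are tied.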