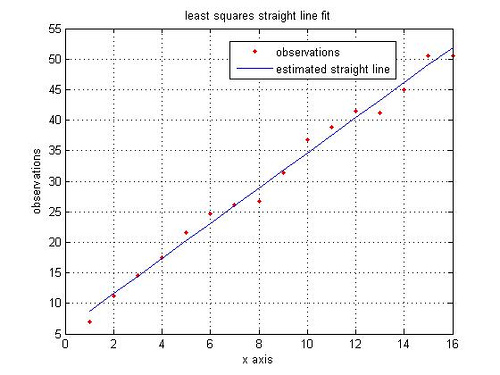

From the post on Closed Form Solution for Linear regression, we computed the parameter vector which minimizes the square of the error between the predicted value

and the actual output

for all

values in the training set. In that model all the

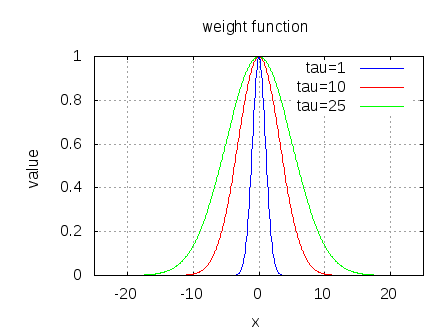

values in the training set is given equal importance. Let us consider the case where it is known some observations are important than the other. This post attempts to the discuss the case where some observations need to be given more weights than others (also known as weighted least squares).
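To make the idea concrete, here is a minimal sketch of weighted least squares for a single input feature, written in plain Python. It is an illustration under stated assumptions, not the derivation from this post: the function name `weighted_line_fit`, the toy data, and the weights are all hypothetical, and the model is assumed to be a straight line with an intercept. Each squared error is multiplied by its weight before summing, so a weight of zero removes an observation from the fit entirely.

```python
def weighted_line_fit(xs, ys, weights):
    """Fit y = intercept + slope * x by minimizing
    sum(w_i * (y_i - (intercept + slope * x_i)) ** 2).

    Hypothetical helper for illustration only.
    """
    if not (len(xs) == len(ys) == len(weights)):
        raise ValueError("xs, ys and weights must have the same length")
    total_w = sum(weights)
    if total_w <= 0:
        raise ValueError("weights must sum to a positive value")

    # Weighted means of the inputs and outputs.
    x_bar = sum(w * x for w, x in zip(weights, xs)) / total_w
    y_bar = sum(w * y for w, y in zip(weights, ys)) / total_w

    # Weighted (unnormalized) covariance of x and y, and variance of x.
    s_xy = sum(w * (x - x_bar) * (y - y_bar)
               for w, x, y in zip(weights, xs, ys))
    s_xx = sum(w * (x - x_bar) ** 2 for w, x in zip(weights, xs))
    if s_xx == 0:
        raise ValueError("weighted inputs have no spread; slope is undefined")

    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Toy data (assumed): the first three points lie on y = x, the last is an outlier.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [0.0, 1.0, 2.0, 10.0]

# Equal weights reproduce ordinary least squares.
equal_fit = weighted_line_fit(xs, ys, [1.0, 1.0, 1.0, 1.0])

# Giving the outlier zero weight fits only the first three points.
downweighted_fit = weighted_line_fit(xs, ys, [1.0, 1.0, 1.0, 0.0])

print("equal weights (intercept, slope):", equal_fit)
print("outlier down-weighted (intercept, slope):", downweighted_fit)
```

With equal weights the outlier pulls the fitted line steeply upward, while setting its weight to zero recovers the line through the remaining points. Intermediate weights trade off between these two extremes.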
